Difference between revisions of "Evaluation report"


Revision as of 16:51, 20 January 2022


General information

After the evaluator has uploaded the files, they can start the evaluation.

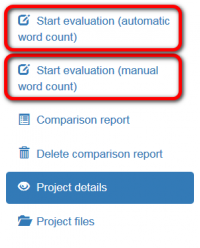

The evaluator can start the evaluation either with the automatic word count or by entering the word count manually:

For more info on both methods, please check the relevant sections below.

Automatic vs. manual word count

Automatic:

1. Used for fully reviewed files.

2. The "Evaluation sample word count limit" is used to adjust how many segments for evaluation will be displayed.

3. The system will display only the segments with the total word count specified as "Evaluation sample word count limit".

For example, if 1000 was specified as "Evaluation sample word count limit", the system will display around 100 segments with around 1000 words in total (of course, it may vary depending on the size of segments).

Note: If the evaluator specifies 1000 as "Evaluation sample word count limit" while all corrected segments contain only 500 words (say, the file has 900 words in total), the system will still display the corrected segments, around 500 words in total. This means that 1000 can be safely used as "Evaluation sample word count limit" even if the real total word count is lower.

4. When calculating the score, the "Total source words" is used.

For example, if the evaluation report includes corrected segments with around 1000 words and the total source word count is 2130, then 2130 will be used in the formula.
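The sampling behaviour described for the automatic word count can be illustrated with a minimal sketch. It is not the system's actual implementation: the function names, the whitespace-based word counting, and the rule "keep adding randomly chosen segments until the limit is reached" are all assumptions made for illustration.

```python
import random


def count_words(text):
    # Assumption: words are counted by splitting on whitespace.
    return len(text.split())


def sample_corrected_segments(corrected_segments, word_limit, seed=None):
    """Pick random corrected segments until word_limit is reached.

    Returns the sampled segments and their total word count.
    Hypothetical helper, not part of the real system.
    """
    rng = random.Random(seed)
    shuffled = list(corrected_segments)
    rng.shuffle(shuffled)

    sample, total = [], 0
    for segment in shuffled:
        if total >= word_limit:
            break
        sample.append(segment)
        total += count_words(segment)
    return sample, total


# 300 corrected segments of 10 words each, limit 1000:
# about 100 segments with about 1000 words are selected.
segments = [" ".join(["word"] * 10)] * 300
sample, total = sample_corrected_segments(segments, 1000, seed=0)
print(len(sample), total)
```

If the corrected segments contain fewer words than the limit, the loop simply runs out of segments, so everything is displayed. This matches the note above about safely using 1000 as the limit.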

Manual:

1. Used for partially reviewed files (in order not to split the file into parts and import only the reviewed part).

2. The "Evaluated source words" should reflect the number of words in the reviewed part of the file.

For example, a reviewer reviewed only 1500 words in a 5000-word file. Then they should specify 1500 as "Evaluated source words" and the system will not take the remaining 3500 words into account.

3. The system will display all the corrected segments. So, if the file is large, the evaluator will have to evaluate many more segments.

4. When calculating the score, the "Total source words" is used. In this case, "Evaluated source words" = "Total source words".
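The difference between the two modes comes down to which word count enters the score calculation. The sketch below shows only that choice; the scoring formula itself is not given on this page, so it is not reproduced here. The function name and the mode strings are hypothetical.

```python
def words_for_score(mode, total_source_words, evaluated_source_words=None):
    """Return the word count that goes into the score calculation.

    Hypothetical helper: illustrates the rule from the two lists above.
    """
    if mode == "automatic":
        # Automatic: the file's total source word count is used.
        return total_source_words
    if mode == "manual":
        # Manual: the number entered by the evaluator replaces the total.
        if evaluated_source_words is None:
            raise ValueError("manual mode needs the evaluated source words")
        return evaluated_source_words
    raise ValueError(f"unknown mode: {mode!r}")


print(words_for_score("automatic", 2130))     # 2130
print(words_for_score("manual", 5000, 1500))  # 1500
```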

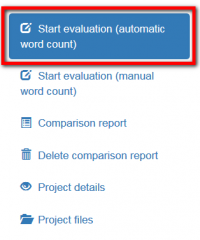

Start evaluation (automatic word count)

If you select this option, the system will display randomly selected segments containing only corrected units for evaluation:

Then you may configure the evaluation process:

- Skip repetitions - the system will hide repeated segments (only one of them will be displayed in this case).

- Skip locked units - the system will hide "frozen" units. For example, the client may want some parts that are extremely important to them to stay unchanged. Besides, extra units slow the evaluator's work down.

- Evaluation sample word count limit - the number of words in edited segments chosen for evaluation.

Having applied the settings you need, press "Start evaluation" to initiate the process.
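The effect of the "Skip repetitions" and "Skip locked units" options can be sketched as a simple filter. This is an illustration under assumptions: the `Unit` class, its fields, and the rule that a repetition is a unit with identical source text are hypothetical and not taken from the system.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    source: str           # source text of the unit
    locked: bool = False  # True for "frozen" units


def filter_units(units, skip_repetitions=True, skip_locked=True):
    """Return the units that would remain visible with the given options."""
    seen_sources = set()
    visible = []
    for unit in units:
        if skip_locked and unit.locked:
            continue
        if skip_repetitions:
            # Assumption: keep only the first unit with a given source text.
            if unit.source in seen_sources:
                continue
            seen_sources.add(unit.source)
        visible.append(unit)
    return visible


units = [
    Unit("Save"),
    Unit("Save"),                       # repetition
    Unit("Legal notice", locked=True),  # locked unit
    Unit("Cancel"),
]
print([u.source for u in filter_units(units)])  # ['Save', 'Cancel']
```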

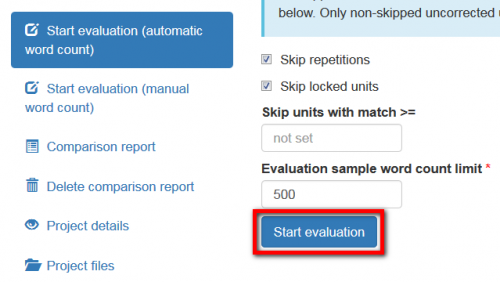

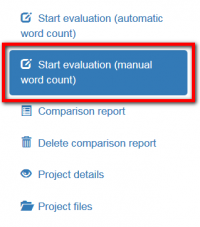

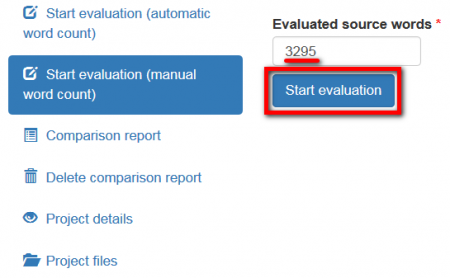

Start evaluation (manual word count)

If the word count given by the system does not correspond to the word count you want, you may manually enter the total word count before starting evaluation.

To do this, press "Start evaluation (manual word count)":

Enter the number of evaluated source words:

Then press "Start evaluation" and the system will display all corrected segments of the document.

Thus, you will be able to select the segments for evaluation on your own.

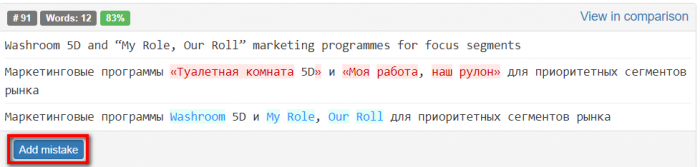

Mistakes

You can add a mistake:

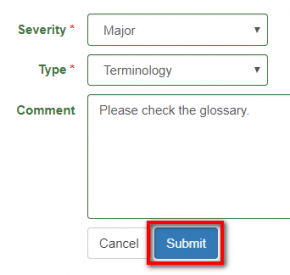

You may describe it:

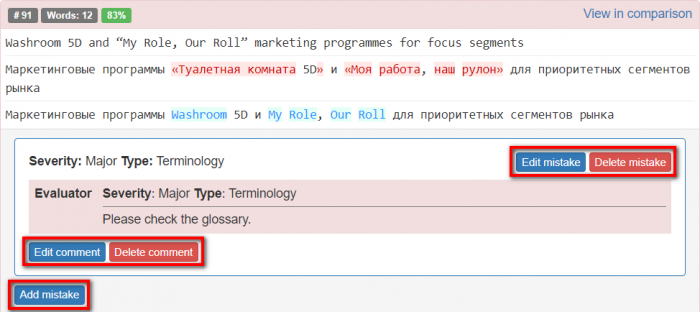

You can edit or delete a mistake or comment, or add another mistake, by clicking the corresponding buttons:

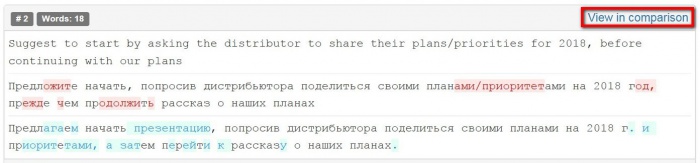

- View in comparison - this link takes you to the page with the Comparison report:

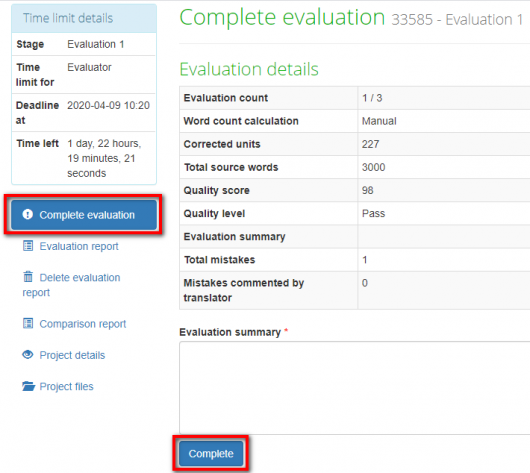

When the mistake classification is done, the project evaluator has to press "Complete evaluation":

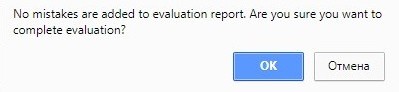

Note: If you press "Complete" and no mistakes are added to the report, the system will warn you:

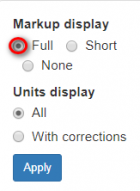

Markup display

The "Markup display" option defines how tags are displayed:

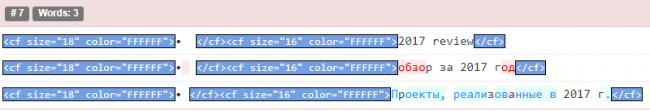

- Full - tags have original length, so you can see the data within:

- Short - tags are compressed and you see only their position in the text:

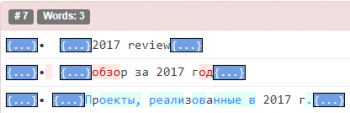

- None - tags are totally hidden, so they will not distract you:

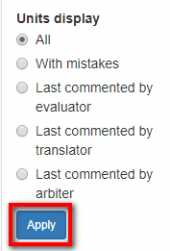
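The three markup display modes can be illustrated with a small sketch. The tag syntax (angle-bracket tags) and the numbered placeholders used for the "Short" mode are assumptions; the real system's tag format and rendering may differ.

```python
import itertools
import re

# Assumption: tags look like <...> in the segment text.
TAG_PATTERN = re.compile(r"<[^<>]+>")


def render_markup(text, mode):
    """Render a segment according to the Markup display mode."""
    if mode == "Full":
        # Tags keep their original length and content.
        return text
    if mode == "Short":
        # Tags are compressed to numbered placeholders marking their position.
        counter = itertools.count(1)
        return TAG_PATTERN.sub(lambda match: f"<{next(counter)}>", text)
    if mode == "None":
        # Tags are hidden completely.
        return TAG_PATTERN.sub("", text)
    raise ValueError(f"unknown markup display mode: {mode!r}")


segment = 'Click <a href="help.html">here</a>.'
print(render_markup(segment, "Full"))   # Click <a href="help.html">here</a>.
print(render_markup(segment, "Short"))  # Click <1>here<2>.
print(render_markup(segment, "None"))   # Click here.
```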

Units display

- All - all text segments are displayed.

- With mistakes - only text segments with mistakes are displayed.

- Last commented by evaluator - only text segments last commented by the evaluator are displayed.

- Last commented by translator - only text segments last commented by the translator are displayed.

- Last commented by arbiter - only text segments last commented by the arbiter are displayed.
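The "Units display" options behave like filters over the list of segments. A minimal sketch follows, assuming a hypothetical `Segment` record with a mistake count and the role of the last commenter; the real data model is not described on this page.

```python
from dataclasses import dataclass
from typing import Optional

ROLES = ("evaluator", "translator", "arbiter")


@dataclass
class Segment:
    text: str
    mistakes: int = 0                        # number of mistakes added
    last_commented_by: Optional[str] = None  # one of ROLES, or None


def visible_segments(segments, display):
    """Return the segments shown for the given Units display option."""
    if display == "All":
        return list(segments)
    if display == "With mistakes":
        return [s for s in segments if s.mistakes > 0]
    prefix = "Last commented by "
    if display.startswith(prefix) and display[len(prefix):] in ROLES:
        role = display[len(prefix):]
        return [s for s in segments if s.last_commented_by == role]
    raise ValueError(f"unknown display option: {display!r}")


segments = [
    Segment("First segment"),
    Segment("Second segment", mistakes=1, last_commented_by="evaluator"),
    Segment("Third segment", mistakes=2, last_commented_by="translator"),
]
print([s.text for s in visible_segments(segments, "With mistakes")])
print([s.text for s in visible_segments(segments, "Last commented by translator")])
```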

Press "Apply" after changing the preferences:

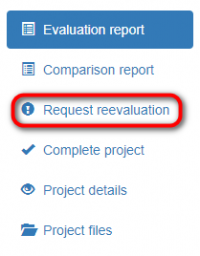

Reevaluation and arbitration requests

When the mistake classification is done, the project evaluator has to press "Complete evaluation" => "Complete", and the system will send the quality assessment report to the translator.

When the translator receives this report and looks through the classification of each mistake, they may press "Complete project" (if they agree with the evaluator; in this case, the project will be completed) or "Request reevaluation" (if they disagree).

If reevaluation is requested, the project will be sent to the evaluator, who will review the translator's comments.

If they are convincing, the evaluator may change the mistake severity. The translator will receive the reevaluated project.

The translator can send this project for reevaluation one more time.

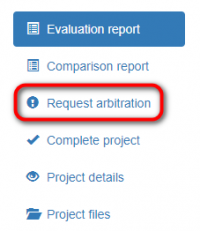

If the translator and the evaluator have not reached an agreement, the translator can send the project to the arbiter by pressing "Request arbitration" (it appears instead of "Request reevaluation"):